Augmenting supervised neural networks with unsupervised objectives for large-scale image classification

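I read this not so much because it seemed important, but because a paper cited by a CVPR 2017 best paper turned out to be written by Korean authors.

It seems to be a kind of method for dealing with problems such as vanishing and exploding gradients during training... I probably didn't need to read it. It proposes three variations of an augmented autoencoder.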

Abstract

We investigate joint supervised and unsupervised learning in a large-scale setting by augmenting existing neural networks with decoding pathways for reconstruction.

1. Introduction

Unsupervised pre-training was popular for a while, but hardly anyone does it these days. There are many (supervised-learning) techniques that are not limited by the modeling assumptions of unsupervised methods.

There have been a few attempts to combine supervised and unsupervised learning, but none of them dealt with large-scale data. Zhao et al. [1] proposed the stacked "what-where" autoencoder (SWWAE) using the "unpooling" operator of Zeiler et al. [2] and got decent results on CIFAR with extended unlabeled data, but not on larger datasets.
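To make the "unpooling" operator a bit more concrete, here is a minimal NumPy sketch, assuming non-overlapping 2x2 max pooling on a single 2D feature map. The function names `max_pool_with_switches` and `unpool` are made up for this note and do not come from either paper. The idea it illustrates: pooling records where each maximum came from (the pooling switches, i.e. the "where"), and unpooling puts the pooled value (the "what") back at that position with zeros everywhere else.

```python
import numpy as np

def max_pool_with_switches(x, k=2):
    """Non-overlapping k x k max pooling on a 2D map.

    Returns the pooled map and the "switches": the flat index (0..k*k-1)
    of the maximum inside each pooling window.
    """
    h, w = x.shape
    assert h % k == 0 and w % k == 0, "sketch assumes sizes divisible by k"
    # Rearrange into (h//k, w//k, k*k): one vector of k*k values per window.
    windows = x.reshape(h // k, k, w // k, k).transpose(0, 2, 1, 3)
    windows = windows.reshape(h // k, w // k, k * k)
    switches = windows.argmax(axis=-1)
    pooled = windows.max(axis=-1)
    return pooled, switches

def unpool(pooled, switches, k=2):
    """Put each pooled value back at its recorded position, zeros elsewhere."""
    ph, pw = pooled.shape
    windows = np.zeros((ph, pw, k * k), dtype=pooled.dtype)
    rows, cols = np.indices((ph, pw))
    windows[rows, cols, switches] = pooled
    # Undo the window rearrangement done in max_pool_with_switches.
    out = windows.reshape(ph, pw, k, k).transpose(0, 2, 1, 3)
    return out.reshape(ph * k, pw * k)

x = np.array([[1., 2., 0., 1.],
              [3., 0., 4., 2.],
              [0., 5., 1., 1.],
              [1., 2., 6., 0.]])
pooled, switches = max_pool_with_switches(x)
restored = unpool(pooled, switches)
```

`restored` has the same shape as `x` but keeps only the four window maxima at their original positions. A decoder without switches would have to place the values at fixed positions instead, which is the trade-off the paper's discussion of pooling switches is about.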

The idea: take part of the classification network, flip it over (and add some augmentation), and use the result as a (reconstructive) decoding pathway. Finetuning this autoencoder also improves the performance of the front part (the part originally used for classification).

We will provide insight on the importance of the pooling switches and the layer-wise reconstruction loss.

2. Related work

- sparse coding

- dictionary learning

- autoencoder

3. Methods

3.1. Unsupervised loss for intermediate representations

The usual loss is $$\frac{1}{N}\sum^N_{i=1} C(x_i, y_i), \quad C(x,y) = l(a_{L+1}, y)$$ where

- \(a_l\) : the \(l\)th layer’s output (so \(a_{L+1}\) in the loss is the output of the last layer),

- \(y\) : ground truth,

- \(l\) : cross-entropy loss,

- \(C\) : classification loss.

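However, if we insert something into the net that produces losses at intermediate layers (described in 3.2), the objective becomes

$$\frac{1}{N}\sum^N_{i=1} \left(C(x_i, y_i)+ \lambda U(x_i)\right)$$

where \(U\) is the unsupervised loss and \(\lambda\) is its weight. Nothing surprising here.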
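As a rough illustration of the joint objective, here is a small NumPy sketch. It assumes \(U\) is simply the squared reconstruction error between the input and its reconstruction, which is only the simplest possible choice and not necessarily the exact form used in the paper. The function names, the toy data, and the value of `lam` are all made up for this example.

```python
import numpy as np

def cross_entropy(probs, labels):
    """l(a_{L+1}, y): per-example cross-entropy from predicted class probabilities."""
    n = probs.shape[0]
    return -np.log(probs[np.arange(n), labels])

def reconstruction_loss(x, x_hat):
    """U(x): assumed here to be the squared L2 reconstruction error per example."""
    diff = (x - x_hat).reshape(x.shape[0], -1)
    return np.sum(diff ** 2, axis=1)

def joint_loss(probs, labels, x, x_hat, lam):
    """(1/N) * sum_i ( C(x_i, y_i) + lam * U(x_i) )."""
    c = cross_entropy(probs, labels)
    u = reconstruction_loss(x, x_hat)
    return np.mean(c + lam * u)

# Toy batch of N = 2 examples with 2 classes and 2 input dimensions.
probs = np.array([[0.5, 0.5], [0.25, 0.75]])   # classifier outputs
labels = np.array([0, 1])                      # ground truth y
x = np.array([[1.0, 2.0], [0.0, 1.0]])         # inputs
x_hat = np.array([[1.0, 1.0], [0.0, 1.0]])     # reconstructions from a decoder
loss = joint_loss(probs, labels, x, x_hat, lam=0.5)
```

Setting `lam=0` recovers the plain supervised loss, so \(\lambda\) directly controls how much the reconstruction term acts as a regularizer.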

3.2. Network augmentation with autoencoders

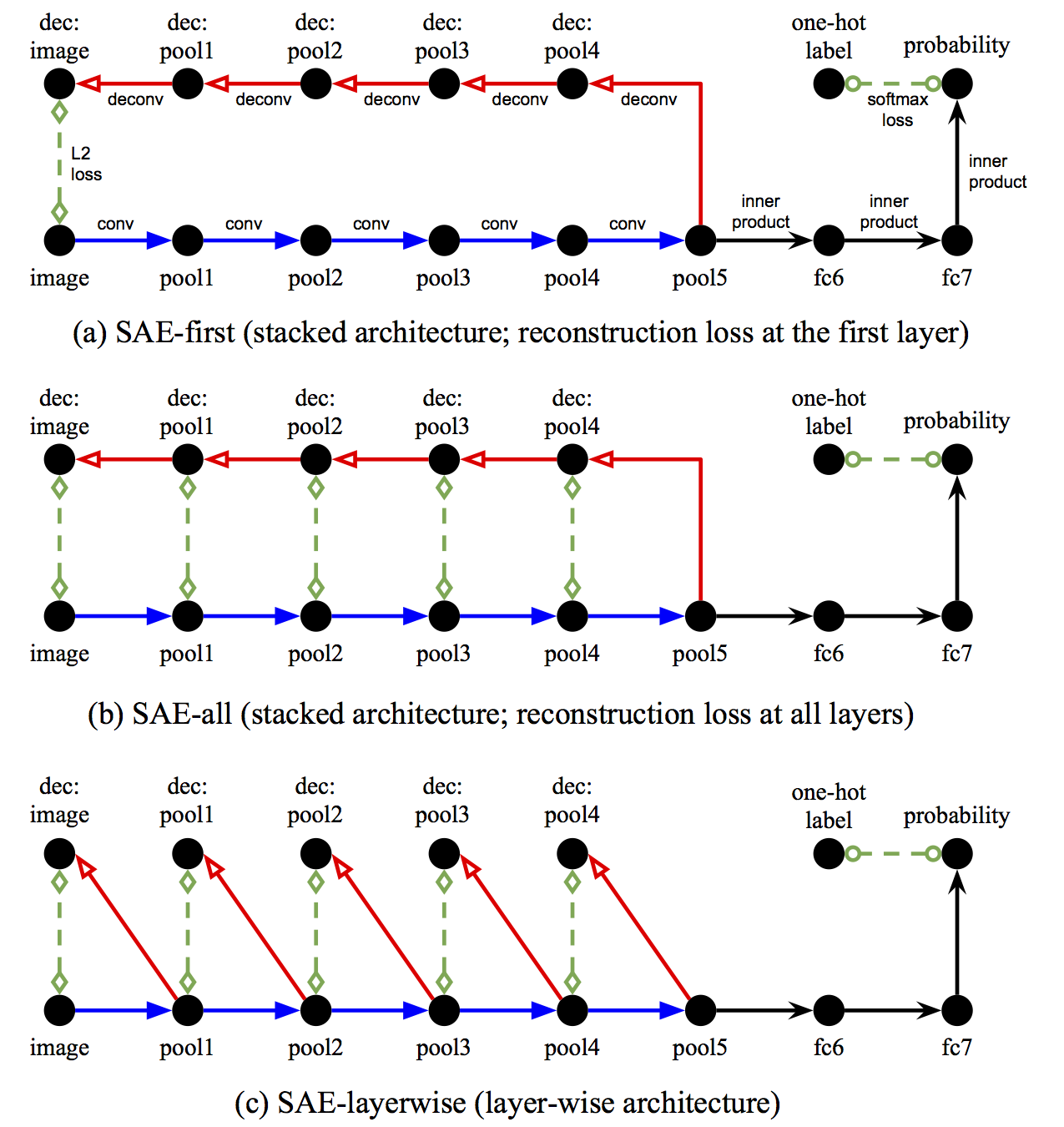

Below is what the authors proposed.

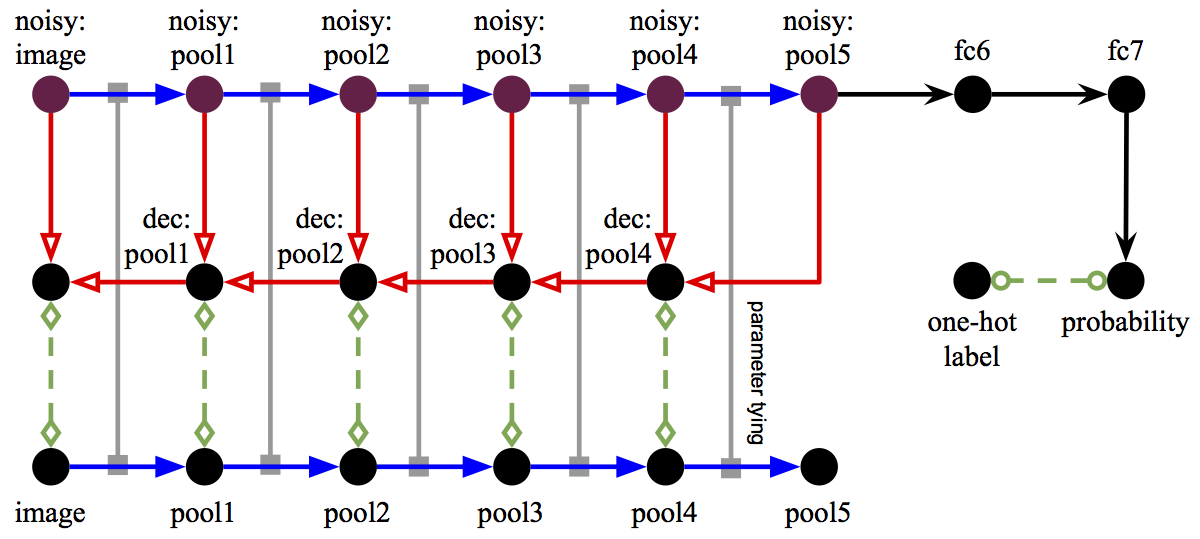

Below is the ladder net [3].

4. Experiments

- ImageNet ILSVRC 2012 dataset

- mainly based on the 16-layer VGGNet

- partially used AlexNet

4.1. Training procedure

Steps

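- Initialization: the encoding pathway uses pretrained weights, the decoding pathway is initialized from a Gaussian

- Freeze the encoding pathway and train the SAE-layerwise net

- Train SAE-first/all using the SAE-layerwise net as the pretrained net

- Finetune both the encoding and decoding pathways with a reduced learning rate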

SGD with momentum 0.9. That's fairly large, isn't it? (We found the learning rate annealing not critical for SAE-layerwise pretraining. Proper base learning rates could make it sufficiently converged within 1 epoch.)
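The staged procedure above can be sketched roughly as follows. This is only a toy illustration in NumPy with dummy gradients: the helper `sgd_momentum_step`, the parameter names, the Gaussian standard deviation, and the learning rates are all assumptions for this note, and only the momentum of 0.9 comes from the paper. The step where SAE-first/all starts from the SAE-layerwise net is left out because it changes which loss is used, not the update rule.

```python
import numpy as np

def sgd_momentum_step(params, grads, velocity, lr, momentum=0.9, frozen=()):
    """One in-place SGD-with-momentum update; names listed in `frozen` are skipped."""
    for name in params:
        if name in frozen:
            continue
        velocity[name] = momentum * velocity[name] - lr * grads[name]
        params[name] += velocity[name]

rng = np.random.default_rng(0)

# Initialization: encoder "pretrained" (stand-in values), decoder Gaussian.
params = {
    "encoder": np.ones(3),
    "decoder": rng.normal(0.0, 0.01, size=3),
}
velocity = {k: np.zeros_like(v) for k, v in params.items()}
grads = {k: np.ones_like(v) for k, v in params.items()}  # dummy gradients

decoder_before = params["decoder"].copy()

# Train the decoding pathway only, with the encoding pathway frozen.
sgd_momentum_step(params, grads, velocity, lr=0.1, frozen=("encoder",))
encoder_after_stage = params["encoder"].copy()

# Finetune both pathways with a reduced learning rate.
sgd_momentum_step(params, grads, velocity, lr=0.01)
```

In a real framework the same effect would come from excluding the encoder parameters from the optimizer (or disabling their gradients) during the first stage, then adding them back for finetuning.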

4.2. Image reconstruction via decoding pathways

Conclusion

- the pooling switch connections between the encoding and decoding pathways were helpful, but not critical for improving the performance of the classification network in large-scale settings

- the decoding pathways mainly helped the supervised objective reach a better optimum

- the layer-wise reconstruction loss could effectively regularize the solution to the joint objective.

References

- [1] Zhao, J., Mathieu, M., Goroshin, R., and LeCun, Y. Stacked what-where auto-encoders. arXiv:1506.02351, 2015.

- [2] Zeiler, M., Taylor, G., and Fergus, R. Adaptive deconvolutional networks for mid and high level feature learning. In ICCV, 2011.

- [3] Rasmus, A., Valpola, H., Honkala, M., Berglund, M., and Raiko, T. Semi-supervised learning with ladder networks. In NIPS, 2015.